Alan Turing was a pioneer of machine learning, whose work continues to shape the crucial question: can machines think?

When Alan Turing turned his attention to artificial intelligence, there was probably no one in the world better equipped for the task. His paper ‘Computing Machinery and Intelligence’ (1950) is still one of the most frequently cited in the field. Turing died young, however, and for a long time most of his work remained either classified or otherwise inaccessible. So it is perhaps not surprising that there are important lessons left to learn from him, including about the philosophical foundations of AI.

Turing’s thinking on this topic was far ahead of everyone else’s, partly because he had discovered the fundamental principle of modern computing machinery – the stored-program design – as early as 1936 (a full 12 years before the first modern computer was actually engineered). Turing had only just (in 1934) completed a first degree in mathematics at King’s College, Cambridge, when his article ‘On Computable Numbers’ (1936) was published. In this article, one of the most important mathematical papers in history, he described an abstract digital computing machine, known today as a universal Turing machine.

Virtually all modern computers are modelled on Turing’s idea. However, he originally conceived these machines merely because he saw that a human engaged in the process of computing could be compared to one, in a way that was useful for mathematics. His aim was to define the subset of real numbers that are computable in principle, independently of time and space. For this reason, he needed his imaginary computing machine to be maximally powerful.

To achieve this, he first imagined an infinite supply of tape (the storage medium of the imaginary machine). Most importantly, he discovered a method for setting the central mechanism of the machine so that it could imitate any possible setting of that mechanism. (The central mechanism had to be capable of being set in infinitely many different ways, each determining what the machine does in response to what it scans on the tape.) The essential ingredient of this method is the stored-program design: a universal Turing machine can imitate any other Turing machine only because – as Turing noted – the basic programming of the central mechanism (ie the way the mechanism is set) can itself be stored on the tape, and hence can be modified (scanned, written, erased). Thus, Turing specified a type of machine that could compute any real number, and indeed anything whatsoever, that could possibly be computed by any machine that scans, prints and erases automatically according to a given set of instructions; moreover, to the extent that the basic analogy with a human in the process of computing holds, it could compute anything that a human could possibly compute.
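The idea that a machine's 'setting' can itself be written down as symbols is easy to illustrate in a few lines of Python. The following is only a minimal sketch, not Turing's own construction: the rule format (`state,read,write,move,next` separated by semicolons), the state names `q0` and `halt`, and the functions `decode` and `run` are all assumptions made for this illustration. One fixed routine plays the role of the central mechanism, and the machine it imitates is determined entirely by the description string it is handed.

```python
from collections import defaultdict

BLANK = "_"  # symbol assumed here for an empty tape square


def decode(description):
    """Turn a rule string into a transition table.

    Each rule is 'state,read,write,move,next'; rules are separated by ';'.
    This encoding is a hypothetical one chosen for the sketch.
    """
    table = {}
    for rule in description.split(";"):
        state, read, write, move, nxt = rule.split(",")
        table[(state, read)] = (write, move, nxt)
    return table


def run(description, tape_input, start="q0", halt="halt", max_steps=10_000):
    """Imitate the machine given by `description` on `tape_input`."""
    table = decode(description)
    # The tape is unbounded in both directions; unvisited squares are blank.
    tape = defaultdict(lambda: BLANK, enumerate(tape_input))
    state, head, steps = start, 0, 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("step limit reached before halting")
        write, move, state = table[(state, tape[head])]
        tape[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    if not tape:
        return ""
    cells = [tape[i] for i in range(min(tape), max(tape) + 1)]
    return "".join(cells).strip(BLANK)


# Two different machines, each stored as nothing more than a string of symbols.
FLIP_BITS = "q0,0,1,R,q0;q0,1,0,R,q0;q0,_,_,R,halt"  # inverts every binary digit
ADD_ONE = "q0,1,1,R,q0;q0,_,1,R,halt"                # appends a stroke in unary

print(run(FLIP_BITS, "1011"))  # 0100
print(run(ADD_ONE, "111"))     # 1111
```

Because `FLIP_BITS` and `ADD_ONE` are ordinary data, they can be read, copied or altered by a program just as any other string can, which is the point of the stored-program design. A genuine universal Turing machine goes one step further than this sketch by keeping the description on the same tape as the input.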

It is important to understand that the stored-program design is not only the most fundamental principle of modern computing – it also already contains a deep insight into the limits of machine learning: namely, that there is nothing that such a machine can do in principle that it cannot in principle figure out for itself. Turing saw this implication and its practical potential very early on. And he soon became very interested in the question of machine learning, several years before the stored-program design was first implemented in an actual machine.

As Turing’s Cambridge teacher, life-long collaborator and fellow computer pioneer Max Newman wrote: ‘The description that he gave of a “universal” computing machine was entirely theoretical in purpose, but Turing’s strong interest in all kinds of practical experiment made him even then interested in the possibility of actually constructing a machine on these lines.’

Article reproduced from https://aeon.co/essays/why-we-should-remember-alan-turing-as-a-philosopher

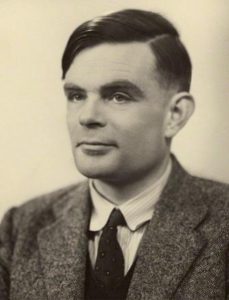

Alan Turing photographed by Elliott and Fry in 1951. Courtesy the National Portrait Gallery, London

PHI Learning books on AI and Machine Learning can be browsed at

https://www.phindia.com/Books/ShowBooks/ODA/Machine-Learning
